Initial point generation for optimization through facet reflections

- This article is an overview of the use of facet reflections for bringing an arbitrary point outside of a convex polytope to inside.

- You can download the MATLAB codes for the examples here.

- See “Finite convergence into a convex polytope via facet reflections” for more information.

Many algorithms for constrained optimization problems need a feasible initial point from which to start. If the constraint set is a convex polytope, it is always possible to bring an arbitrary point outside of the polytope to inside by performing only reflections over the hyperplanes defining the facets [1]. Application of such a reflection algorithm provides a very efficient way to bring an arbitrary point to inside a region defined by constraints.

For convex and nonlinear constrained optimization problems, interior-point methods and trust-region methods are some of the most important classes of algorithms. In such algorithms, a feasible point is obtained by utilizing methods such as the two-phase approach and self-dual embedding techniques [2, 3]. These methods rely on secondary or modified optimization problems. In contrast, the facet reflection algorithm is an exceedingly simple, and computationally inexpensive, alternative for finding an interior point for linear inequality constrained problems. Below are two examples of applications of the algorithm.

For convex and nonlinear constrained optimization problems, interior-point methods and trust-region methods are some of the most important classes of algorithms. In such algorithms, a feasible point is obtained by utilizing methods such as the two-phase approach and self-dual embedding techniques [2, 3]. These methods rely on secondary or modified optimization problems. In contrast, the facet reflection algorithm is an exceedingly simple, and computationally inexpensive, alternative for finding an interior point for linear inequality constrained problems. Below are two examples of applications of the algorithm.

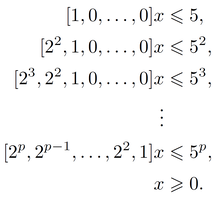

Example A: Consider the Klee-Minty polytopes in \(R^p\) defined as

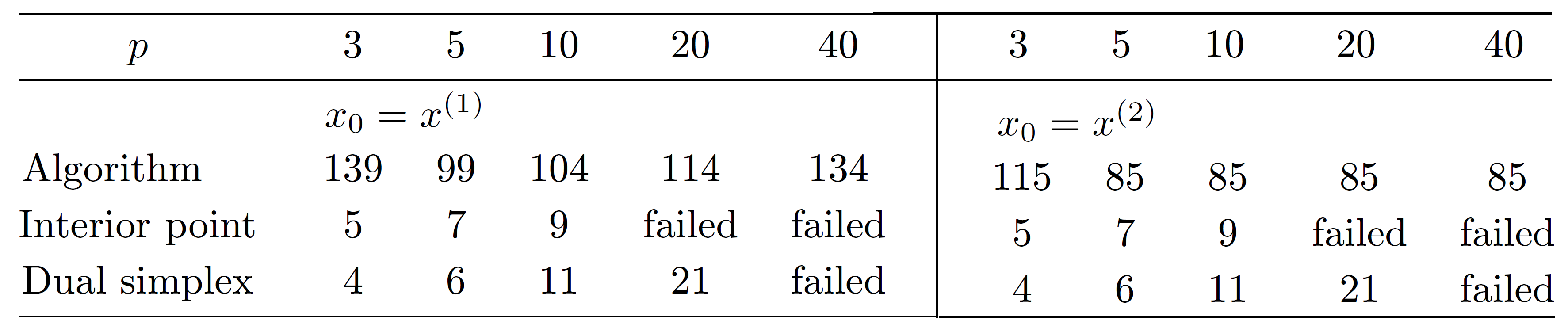

We consider moving two initial points, \(x^{(1)}=(-250, -250, …, -250) \) and \(x^{(2)}=(250, 250, …, 250) \) to inside the polytope as examples of a phase 1 method for the two-phase approach to solve optimization problems with infeasible starting points. The performance of the reflection algorithm is compared with the interior point and the dual simplex algorithms. The results are tabulated in Table 1 for different values of p.

For small p, the interior point and dual simplex algorithms complete the task in fewer steps than the reflection algorithm. However, the interior point algorithm fails for p > 15, as the solution converges to an infeasible point. Truncation error due to the limited number of bits available for the solver leads to the loss of feasibility. The dual simplex method fails for p > 35, as the constraint matrix coefficients become too large in magnitude. In contrast, the reflection algorithm completed the task even for p = 1000, finishing 1094 iterations in 0.5 seconds for \(x^{(1)}\) (on a 64-bit laptop with a Core i7 - 4 core processor at 1.8 GHz). Unlike other methods, the algorithm does not utilize already-satisfied half spaces, as evidenced in the case \(x^{(2)}\).

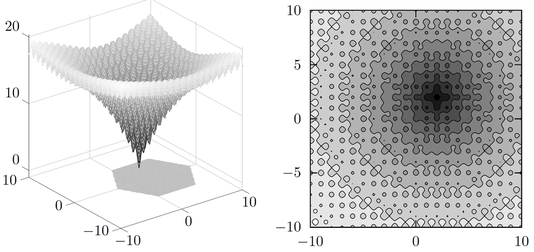

Example B: The Ackley function is used as a performance test function for optimization algorithms [4]. Here we consider the Ackley function of the form

\( f(x)=-20exp[- \sqrt{0.02((x_1-2)^2+(x_2-2)^2)}]-exp[0.5(cos2\pi(x_1-2)+cos2\pi(x_2-2))]+e+20\),

to find the global optimal of \(f(x)\) inside a regular convex hexagon, where \(x=(x_1, x_2)\). Function \(f(x)\) has many local minima, as shown in the contour plot in Figure 1 below. Its global minimum is \(f(2,2)=0\). Depending on the initial point, optimization algorithms can converge to any of the local optimal. If the initial point is infeasible, there is no way to establish a search region with existing approaches.

\( f(x)=-20exp[- \sqrt{0.02((x_1-2)^2+(x_2-2)^2)}]-exp[0.5(cos2\pi(x_1-2)+cos2\pi(x_2-2))]+e+20\),

to find the global optimal of \(f(x)\) inside a regular convex hexagon, where \(x=(x_1, x_2)\). Function \(f(x)\) has many local minima, as shown in the contour plot in Figure 1 below. Its global minimum is \(f(2,2)=0\). Depending on the initial point, optimization algorithms can converge to any of the local optimal. If the initial point is infeasible, there is no way to establish a search region with existing approaches.

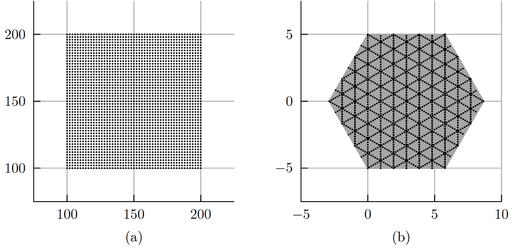

Since reflections are global isometries, we expect the reflection algorithm to preserve some of the structure of the set of initial points. Here, we bring a 51 X 51 grid of points, as shown in Figure 2(a), to inside the hexagon. It takes less than 1/3 of a second to complete 30 iterations to bring all 2601 points inside. The corresponding mapping is shown in Figure 2(b). By evaluating function values, we obtain (2, 1.92) as an approximation to the global optimal. Then we solve the optimization problem using Matlab's fmincon interior-point algorithm. The algorithm converges to the global optimal point (2, 2) in 12 iterations.

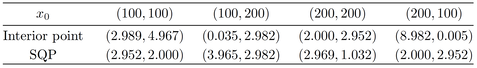

For comparison, we use the four edges of the grid to obtain the optimal solutions using the interior-point method and Sequential Quadratic Programming (the SQP method). The results are in Table 2. None of the points converge to the global optimal solution.

References:

1. D.B. Ekanayake, D. J. LaFountain, and B Petracovici. "Finite convergence into a convex polytope via facet reflections." Applications of Mathematics (2022): 1-18.

2. S. Boyd and L. Vandenberghe. Convex optimization. Cambridge university press, 2004.

3. Y. Ye, M. J. Todd, and S. Mizuno. "An O (√ nL)-iteration homogeneous and self-dual linear programming algorithm." Mathematics of operations research 19.1 (1994): 53-67.

4. D. Ackley. A connectionist machine for genetic hillclimbing. Vol. 28. Springer science & business media, 2012.

1. D.B. Ekanayake, D. J. LaFountain, and B Petracovici. "Finite convergence into a convex polytope via facet reflections." Applications of Mathematics (2022): 1-18.

2. S. Boyd and L. Vandenberghe. Convex optimization. Cambridge university press, 2004.

3. Y. Ye, M. J. Todd, and S. Mizuno. "An O (√ nL)-iteration homogeneous and self-dual linear programming algorithm." Mathematics of operations research 19.1 (1994): 53-67.

4. D. Ackley. A connectionist machine for genetic hillclimbing. Vol. 28. Springer science & business media, 2012.